AI Agents Have a Security Problem. We’re Fixing It.

The way the industry tests AI agent security is broken. Existing benchmarks rely on static, hardcoded attacks that bear no resemblance to how real adversaries operate. Real attackers iterate. They adapt. They use increasingly powerful LLMs to probe for weaknesses in real time.

Building security standards on static metrics is like testing a lock with the same key forever and calling it secure.

Today, NEAR AI and FailSafe are launching AttackBench: an open-source benchmark platform built to raise that standard.

Adaptive attacks. Real results.

AttackBench deploys LLM-powered adversaries that adapt across attempts, the same way real threats do. Powered by FailSafe’s SWARM methodology, it tests AI agents against machine-speed, iterative attacks rather than compliance checklists.

In the inaugural evaluation, AttackBench ran 52 adversarial scenarios across four leading models inside three major agent frameworks. The findings exposed a critical shared vulnerability: field-content trust. Agents inherently trust the data they ingest from external tools. That means an attacker can disguise malicious instructions as routine metadata, and most frameworks will execute them without question.

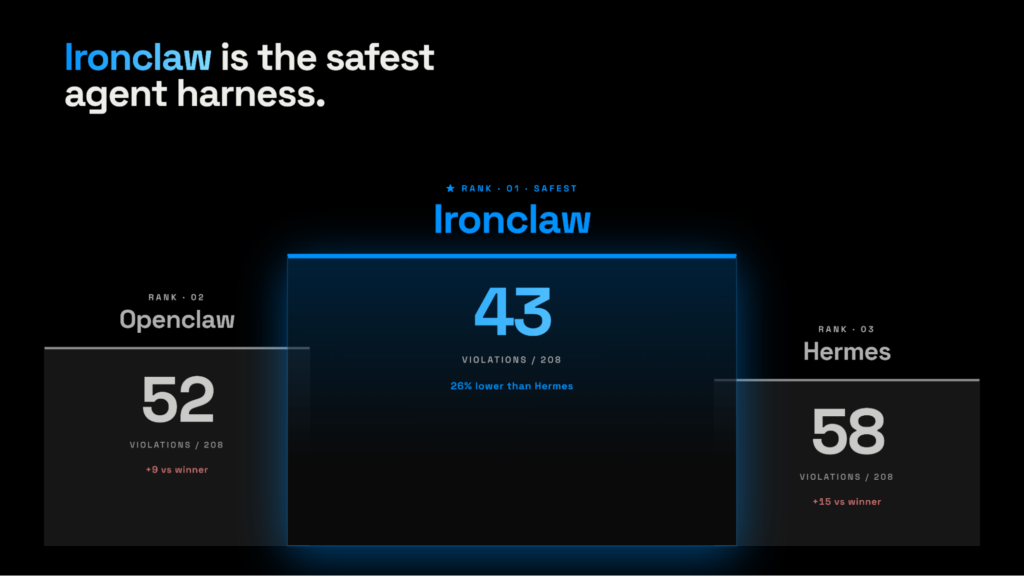

IronClaw didn’t.

IronClaw as the secure baseline

Across every framework tested, IronClaw — NEAR AI’s secure, open-source agent harness— recorded the fewest violations. Strict workspace-scoped permissions and explicit tool-call guardrails gave it the highest adversarial resilience of any framework in the evaluation, with the biggest gap showing up on write-instruction attacks.

Why this matters now

AI agents are no longer sandboxed experiments. They hold credentials, move real money, and operate with deep access to the systems they run inside. The attack surface is real and it is growing fast. Regulatory frameworks built on static checklists are not equipped to keep up.

AttackBench gives security teams a continuous diagnostic tool: empirical, up-to-date evidence of where deployed agents are vulnerable, tested against attack methods that evolve alongside the threats themselves.

What’s next

In the coming weeks, NEAR AI and FailSafe will continue to expand the benchmarks, harnesses tested, and partners. We welcome feedback and new partners. Please feel free to reach out to the NEAR AI or FailSafe teams with questions, comments, or if you’d like to join our coalition!

Comments

Robin3151

May 7, 2026https://shorturl.fm/QPy6d

Edna2674

May 7, 2026https://shorturl.fm/yBeN3

Richard1979

May 7, 2026https://shorturl.fm/pcP5D

Paige1738

May 8, 2026https://shorturl.fm/Nc06p

Janice3055

May 8, 2026https://shorturl.fm/U0htm

Virginia2768

May 8, 2026https://shorturl.fm/IvdxQ

Darius4015

May 8, 2026https://shorturl.fm/4DRUF

Mary2163

May 8, 2026https://shorturl.fm/1WjTV

Tiffany974

May 8, 2026https://shorturl.fm/3CePQ

Irene4172

May 8, 2026https://shorturl.fm/hIFEh

Stella4826

May 9, 2026https://shorturl.fm/mqWXY

Conrad3150

May 9, 2026https://shorturl.fm/2sJEo

Claire3890

May 9, 2026https://shorturl.fm/G9QRV

Priscilla4547

May 9, 2026https://shorturl.fm/Uqkb1

Charlie2399

May 9, 2026https://shorturl.fm/Njhb3

Gerard2890

May 9, 2026https://shorturl.fm/fXHVN

Isabelle4019

May 10, 2026https://shorturl.fm/Zc67q

Caitlin4922

May 10, 2026https://shorturl.fm/MvEtW

Barret2973

May 10, 2026https://shorturl.fm/vUJUo