Venice Is Now Verifiably Private With NEAR AI

Verifiable privacy is a prerequisite for user-owned AI. Most prompts today run through third-party inference providers that can access your inputs at runtime: your financial data, medical questions, product ideas, business strategy, internal research. All that information is exposed during execution.

Today, Venice and NEAR AI are integrating to change that. Venice users can now choose verifiably private text and image models, where nobody, including the infrastructure provider, can see their data.

The Privacy Problem Today With AI Inference

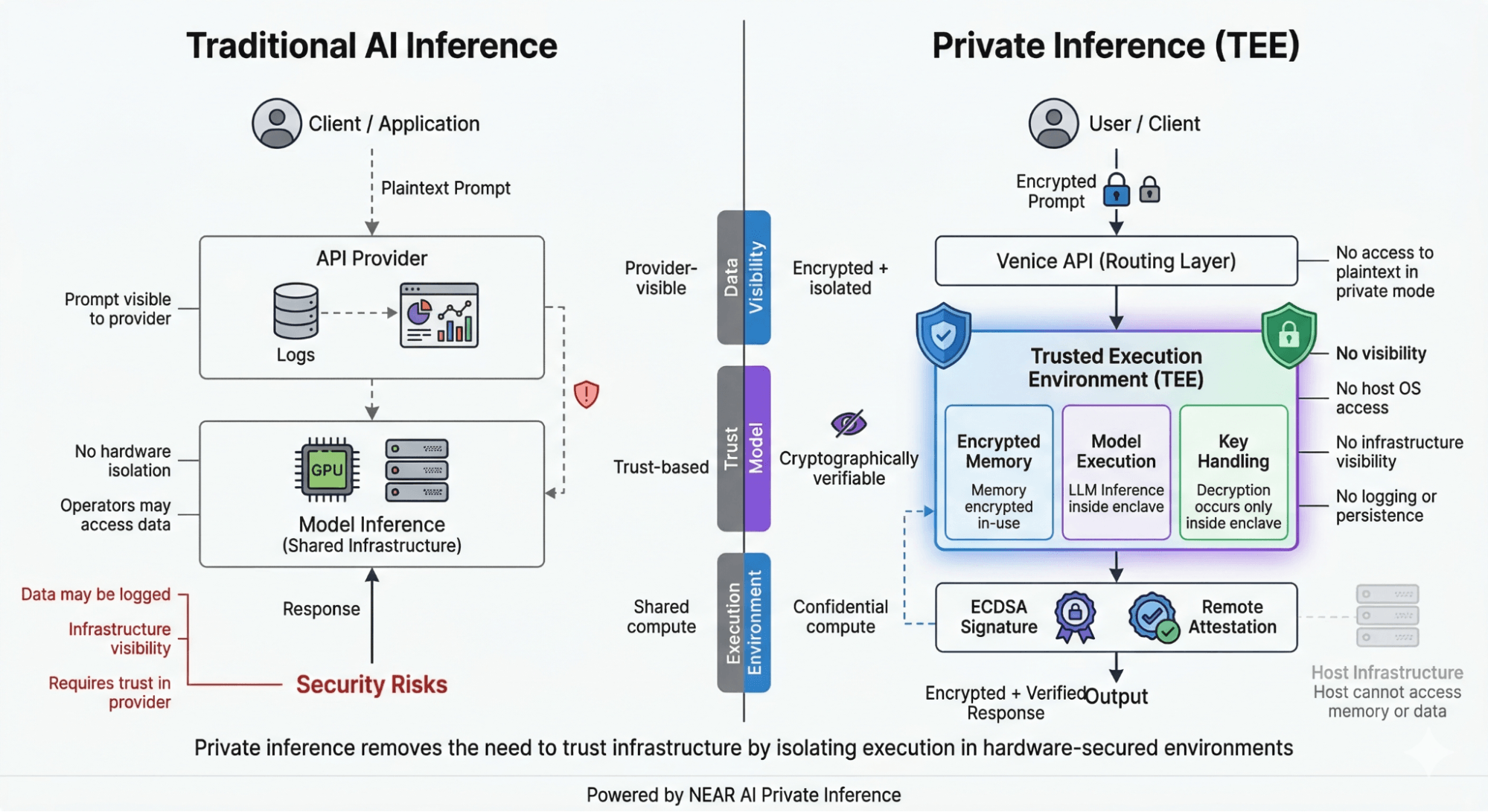

When you send a prompt to most AI APIs, it is encrypted in transit but decrypted on the provider's side, where it passes through systems that can read it. Even if providers don't store data, your prompt is still visible at runtime. It can pass through logging systems, monitoring tools, and internal infrastructure layers. Every modern AI workflow assumes that the provider handling your request is behaving correctly: that they are not logging, inspecting, or misusing your data.

For basic use cases and at a small scale, that assumption is merely uncomfortable. But in agentic workflows, where agents manage credentials, automate decisions, run sensitive pipelines, and act fully on your behalf, the stakes change: that level of exposure becomes a structural vulnerability. You are no longer sending isolated prompts. You are leaking how you think, how you reason, and how your systems operate.

Until now, there has been no real way around this. You either accepted the tradeoff, ran everything locally, or avoided using AI for sensitive work entirely.

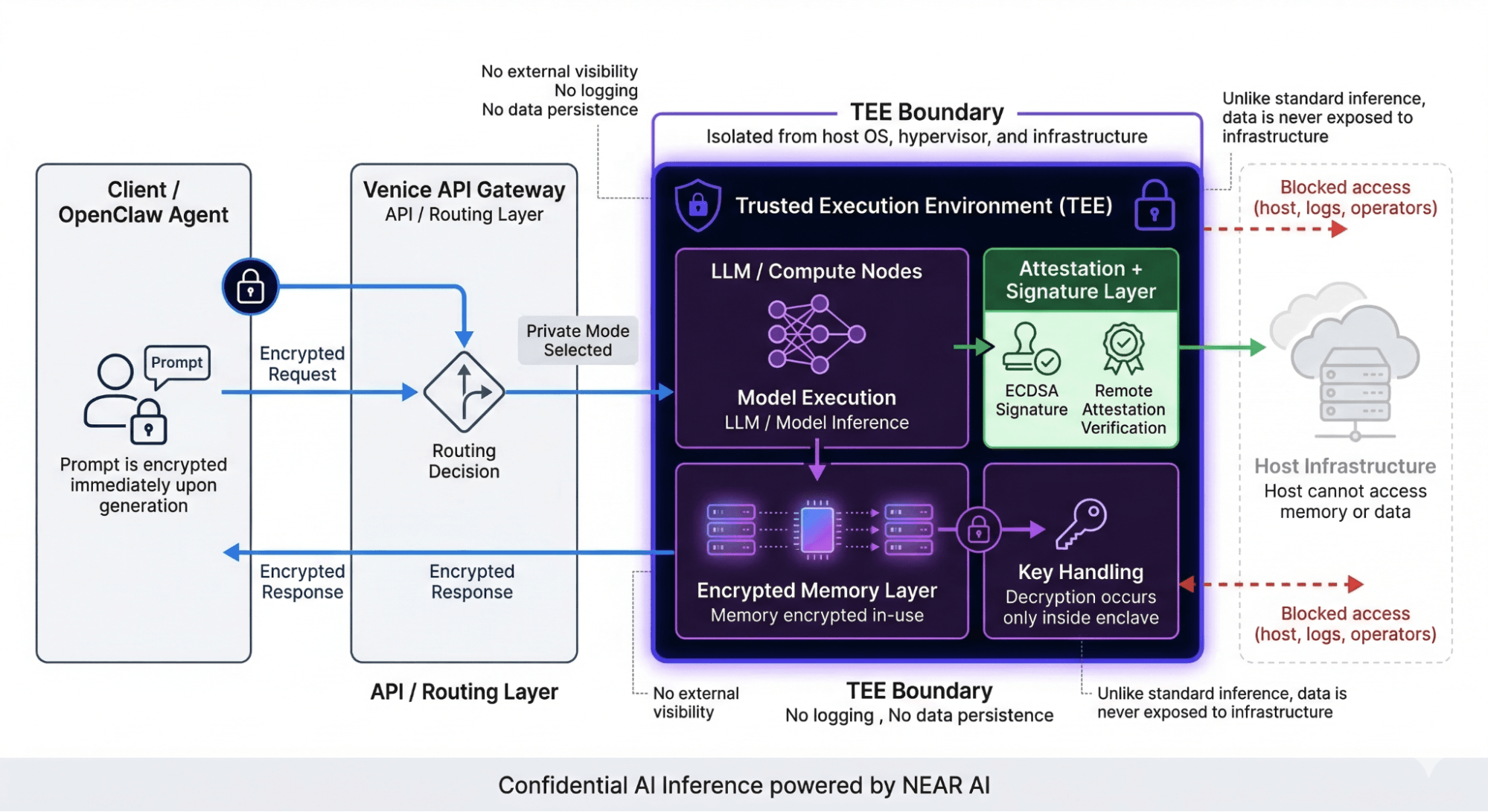

What Venice and NEAR AI are now introducing is a different architecture. Instead of trusting the system, you can verify it.

How Your Data Stays Private With Venice and NEAR AI

In Venice’s standard architecture, your conversations are encrypted in your browser and never stored on Venice’s servers. The GPU processing your request can see the plaintext of that specific conversation, but not your history and not your identity.

NEAR AI private inference removes that final exposure. Your request is routed into a secure enclave known as a Trusted Execution Environment (TEE): a hardware-isolated partition of the processor that is sealed off from the host operating system, the cloud infrastructure, and any external process. The GPU provider sees nothing, and NEAR AI and Venice see nothing either.

Your prompt is decrypted only inside the enclave, the model runs in isolation, and memory remains encrypted during execution. No external system can access the data: not the cloud provider, not the operating system, and not the engineers running the infrastructure.

Once the model completes its work, the output is returned securely and cryptographically signed.
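To make "cryptographically signed" concrete, here is a minimal Python sketch of how a client might check that a signed response matches the exact bytes it sent and received. It assumes the enclave signs the text `sha256(request):sha256(response)`; that format and every function name below are hypothetical, not NEAR AI's documented API. HMAC-SHA256 stands in for the enclave's public-key signature only so the example runs on the standard library. With HMAC the verifier holds the same secret and could forge signatures, which is exactly what a real asymmetric scheme avoids.

```python
import hashlib
import hmac


def signed_text(request_body: bytes, response_body: bytes) -> str:
    """Build the text the enclave is ASSUMED to sign: sha256(request):sha256(response)."""
    req_hash = hashlib.sha256(request_body).hexdigest()
    resp_hash = hashlib.sha256(response_body).hexdigest()
    return f"{req_hash}:{resp_hash}"


def enclave_sign(key: bytes, request_body: bytes, response_body: bytes) -> str:
    """Hypothetical stand-in for the enclave's signer.

    HMAC is used only so this runs with the standard library; a real enclave
    would sign with a private key whose public half is bound to its attestation.
    """
    text = signed_text(request_body, response_body).encode()
    return hmac.new(key, text, hashlib.sha256).hexdigest()


def verify_response(key: bytes, request_body: bytes, response_body: bytes, signature: str) -> bool:
    """Recompute the signed text from the bytes the client actually sent and
    received, then compare signatures in constant time."""
    expected = enclave_sign(key, request_body, response_body)
    return hmac.compare_digest(expected, signature)


if __name__ == "__main__":
    key = b"hypothetical-enclave-key"
    req = b'{"prompt": "summarize my notes"}'
    resp = b'{"text": "Here is a summary..."}'
    sig = enclave_sign(key, req, resp)
    print(verify_response(key, req, resp, sig))         # True
    print(verify_response(key, req, resp + b" ", sig))  # False: response was altered
```

Binding both hashes into one signed text means that altering either the prompt or the reply in transit invalidates the signature.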

What makes this architecture powerful is that users can verify these guarantees themselves. NEAR AI provides attestation and signature verification, allowing you to confirm that your request was executed inside a secure environment and that the response was not tampered with. This replaces blind trust with verifiable guarantees.
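Attestation answers a different question: is the response signed by a key that lives inside a genuine enclave running known code? The Python sketch below shows the client-side checks under heavily simplified assumptions. The `AttestationReport` fields, the 64-byte `report_data` layout (SHA-256 of a client nonce followed by SHA-256 of the signing public key), and the allow-list of trusted measurements are all hypothetical. Verifying the hardware vendor's certificate chain on the report, which a real verifier must do first, is left out.

```python
import hashlib
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical, simplified attestation report. Assumes the hardware
    vendor's signature over the report has already been verified (not shown)."""
    measurement: str    # hash of the code and configuration loaded into the enclave
    report_data: bytes  # 64 bytes the enclave embedded when the report was generated


def expected_report_data(nonce: bytes, signing_key: bytes) -> bytes:
    """ASSUMED layout: sha256(nonce) followed by sha256(signing public key)."""
    return hashlib.sha256(nonce).digest() + hashlib.sha256(signing_key).digest()


def check_attestation(
    report: AttestationReport,
    trusted_measurements: set[str],
    nonce: bytes,
    signing_key: bytes,
) -> bool:
    # 1. The enclave must be running a build the client recognizes.
    if report.measurement not in trusted_measurements:
        return False
    # 2. The report must be fresh (it contains this client's nonce) and must
    #    vouch for the key that will later sign responses.
    return secrets.compare_digest(
        report.report_data, expected_report_data(nonce, signing_key)
    )


if __name__ == "__main__":
    nonce = secrets.token_bytes(32)             # fresh challenge for this session
    key = b"hypothetical-enclave-public-key"
    build = "a" * 64                            # placeholder measurement, not a real value
    report = AttestationReport(build, expected_report_data(nonce, key))
    print(check_attestation(report, {build}, nonce, key))                    # True
    print(check_attestation(report, {build}, secrets.token_bytes(32), key))  # False: stale nonce
```

The nonce keeps an old report from being replayed, and binding the signing key into the report ties later response signatures back to the attested enclave.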

“The most sensitive thing you’ll ever share is how you think. Venice was built on the conviction that no company or government has any business being in that room. NEAR AI’s confidential inference makes that conviction cryptographically verifiable on Venice.”

— Erik Voorhees, Founder & CEO, Venice

“As AI moves from answering questions to taking actions, the privacy of the inference layer stops being a preference and becomes a requirement. You cannot build a resilient agentic economy on infrastructure that requires you to trust the provider. NEAR AI is that infrastructure—and Venice is proof.”

— Illia Polosukhin, Co-Founder of NEAR Protocol and Founder of NEAR AI

Try Verifiably Private Inference on Venice Today

For the first time, the most critical layer of the AI stack, model execution, no longer depends on assumptions about how the provider will behave. Venice and NEAR AI replace provider trust with cryptographic proof, and that distinction is the foundation the agentic economy needs. Venice and NEAR AI are building it now.

Venice is now integrated with NEAR AI’s confidential inference infrastructure. Enable private inference today at venice.ai.

To learn more about NEAR AI’s confidential inference infrastructure, explore near.ai.


binali yıldırım porno ifşa

April 28, 2026Aydın ailesi porno ifşa araması hakkında en çok aranan başlıklar ve internet gündeminde yarattığı etki. 4231

fahrettin altun porno ifşa

April 28, 2026Zülfikarlar grubu porno ifşa araması ile ilgili en son gelişmeler ve internet kullanıcılarının merak ettikleri. 5644

sahte kripto proje

April 28, 2026Hakan Fidan porno ifşa araması ile ilgili dikkat çeken açıklamalar ve ses getiren gelişmeler sayfamızda. 8158

sedes grubu porno ifşa

April 28, 2026Escort kayseri hizmetlerinde iş seyahati yapanlar için özel programlar hazırlanıyor; pratik iletişim, dakik buluşma ve rahat bir akşam garantisi. 6310

escort bursa

April 28, 2026Garantili yatırım araçlarıyla paranı emniyette tut, sabit getirili ve şeffaf modeller arasından sana uygun olanı seç. 9040

seks hikaye

April 28, 2026Financial independence secret roadmap lays out every milestone, every account, and every move you need to retire decades earlier than you planned. 6808

ali babacan porno ifşa

April 28, 2026Kibar holding porno ifşa araması ile ilgili kapsamlı bilgiler ve öne çıkan gelişmeler. 5346

kayıp yok

April 28, 2026Online casino lobisine saniyeler içinde gir: net bonuslar, akıcı oyun yüklemesi ve gece geç saatlere kadar sürecek eğlence. 4890

ephedrine satın al

April 28, 2026Rixos porno ifşa aramasında dikkat çeken son gelişmeler ve en çok tıklanan içerikler bir arada. 6461

yabancı escort

April 28, 2026Untraceable payment investigations documenting the technologies criminals use–insights that keep you informed and vigilant. 7926

ali yerlikaya porno ifşa

April 28, 2026Ahmet Çalık porno ifşa araması ile ilgili en kapsamlı içerik, arşiv bilgileri ve güncel yorumlar tek sayfada. 6573

erbakır porno ifşa

April 28, 2026Abdi İbrahim İlaç porno ifşa araması hakkında en çok arananlar, güncel gelişmeler ve önemli notlar. 8854